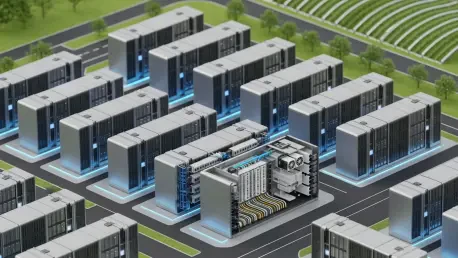

A projected three trillion dollars will flow into digital infrastructure over the next five years to meet the computing demands of artificial intelligence. This financial commitment marks a fundamental departure from the era of bespoke, one-off construction projects that once defined the industry. As demand for hyperscale computing reaches unprecedented heights, the transition toward standardized, modular frameworks has become a strategic imperative. This guide examines best practices for implementing modularity, emphasizing how industrialization addresses the limits of traditional building methods.

The Strategic Shift Toward Modular Data Center Infrastructure

The transition from custom-built facilities to modular systems represents more than a change in architecture; it is a wholesale evolution in how digital capacity is delivered. Historically, data centers were treated as unique engineering challenges, often resulting in long lead times and inconsistent performance metrics. However, the rise of high-density AI workloads necessitates a shift toward repeatability to ensure that infrastructure can keep pace with software innovation. By adopting a productized approach, developers can move away from the “site-as-a-laboratory” mindset and toward a more efficient manufacturing model.

Best practices in modular design are now essential for navigating the explosive requirements of the hyperscale era. This guide explores the core tenets of this shift, focusing on how standardized components allow for rapid deployment without sacrificing the integrity of the mission-critical environment. The discussion centers on the three pillars of modern infrastructure: speed to market, financial predictability, and the industrialization of the entire construction lifecycle. Each of these elements contributes to a more resilient and scalable digital ecosystem.

Why Adopting Modular Best Practices Is Essential for Growth

Moving away from traditional construction methods is no longer just a preference but a necessity for modern digital infrastructure. Conventional building processes are notoriously susceptible to delays, often caused by the complexities of on-site coordination and the unpredictability of local weather or labor conditions. In contrast, modularity introduces a level of control that is impossible to achieve through site-built methods alone. This shift allows organizations to bypass the bottlenecks of the past and embrace a more agile development strategy.

The benefits of modularity extend across several operational dimensions, including compressed project timelines and significantly improved cooling efficiency. Because modules are designed to house specific server configurations, the physical security and thermal management systems can be tailored for maximum performance. Moreover, standardization helps mitigate the risks associated with labor shortages and supply chain volatility. By utilizing pre-assembled components, developers reduce their reliance on a diminishing pool of on-site specialized trades, ensuring that growth is not stifled by external market pressures.

Implementing Modular Design: Actionable Steps and Best Practices

The core principles of repeatable data center construction involve breaking down the facility into clear, industrial steps. This requires a philosophical pivot from “occupant-centric” architecture to “hardware-centric” functionalism. In a traditional office building, the comfort of humans is the priority; in a modern data center, the “occupants” are the server racks. Every design choice must therefore prioritize power density and thermal regulation. This functionalist approach ensures that every square foot of the facility is optimized for the technological assets it houses.

Prioritizing Speed to Market Through Off-Site Fabrication

Pre-engineered, one-megawatt modules manufactured in controlled environments offer the most direct route to rapid deployment. By shifting the bulk of the construction work to a factory setting, developers can ensure higher quality control and more consistent output. This method allows for concurrent work streams: site preparation and foundation laying occur at the same time as the assembly of the actual data halls. Running these streams in parallel reduces the overall construction schedule, often by several months.
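The schedule effect of concurrent work streams comes down to simple arithmetic: a sequential build adds every phase together, while a modular build is gated by the longer of the site stream and the factory stream. The sketch below illustrates that comparison. The phase names and durations are hypothetical placeholders chosen for the example, not figures from any real project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    months: float


def sequential_duration(phases):
    """Traditional build: each phase starts only after the previous one ends."""
    return sum(p.months for p in phases)


def concurrent_duration(site_phases, factory_phases, integration):
    """Modular build: site and factory streams run in parallel, then integrate."""
    site = sum(p.months for p in site_phases)
    factory = sum(p.months for p in factory_phases)
    # The longer of the two parallel streams gates on-site integration.
    return max(site, factory) + integration.months


# Hypothetical durations, chosen only to illustrate the arithmetic.
site_work = [Phase("site preparation", 3), Phase("foundations", 2)]
factory_work = [Phase("module assembly", 6), Phase("factory testing", 1)]
integration = Phase("on-site installation and connection", 2)

traditional = sequential_duration(site_work + factory_work + [integration])
modular = concurrent_duration(site_work, factory_work, integration)
months_saved = traditional - modular

print(f"sequential: {traditional} months, concurrent: {modular} months, "
      f"saved: {months_saved} months")
```

With these placeholder numbers the sequential schedule totals 14 months and the concurrent one 9 months. The saving equals the duration of the shorter parallel stream, which is why moving more work into the factory only helps as long as site work can proceed alongside it.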

Case Study: Hyperscale Deployment and the Compressed Timeline

A major cloud provider recently demonstrated the power of this approach by using standardized modules to bypass lengthy design phases. Faced with an immediate need for regional capacity, the provider avoided the usual eighteen-month build cycle by deploying pre-fabricated units that were ready for connection upon arrival. This strategy allowed the organization to meet market demand in half the time required for a traditional build, which suggests that modularity is among the most effective tools for meeting the fast-paced requirements of the digital economy.

Achieving Cost Certainty via Standardized Procurement and Design

Using a repeatable blueprint creates significant economies of scale in equipment purchasing. When a developer utilizes the same specifications across multiple projects, they gain the leverage to negotiate better terms with suppliers and secure long-term inventory. This consistency eliminates the need for custom architectural elements, which are often the primary source of mid-project corrections and financial surprises. By simplifying the design, the focus remains on the core functionality of the facility, ensuring that resources are allocated where they matter most.

The Impact of “Project Cost Certainty” on Global Expansion

The ability to accurately predict costs across multiple geographic sites is a transformative advantage for global developers. For example, a standardized HVAC and power configuration allowed one developer to replicate a high-efficiency design across three continents with minimal financial variance. This “cost certainty” enabled the firm to secure funding more easily and expand its footprint without the risk of the budget overruns that typically plague international construction projects. Consistency in design translated directly into consistency in the bottom line.
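One way to make "minimal financial variance" measurable is to compare the cost per megawatt of the same design across sites. The sketch below computes the relative spread and checks each site against a budget band. The site names, cost figures, budget, and 5% band are all hypothetical assumptions for illustration, not data from the developer described above.

```python
from statistics import mean, pstdev

# Hypothetical cost per megawatt (millions of dollars) for one repeated design.
cost_per_mw = {"site_a": 10.0, "site_b": 10.4, "site_c": 9.6}


def relative_spread(costs):
    """Population standard deviation divided by the mean (coefficient of variation)."""
    values = list(costs.values())
    return pstdev(values) / mean(values)


def within_budget_band(costs, budget, band):
    """Map each site to True if its cost is within +/- band (a fraction) of budget."""
    return {site: abs(cost - budget) / budget <= band for site, cost in costs.items()}


spread = relative_spread(cost_per_mw)
in_band = within_budget_band(cost_per_mw, budget=10.0, band=0.05)

print(f"relative spread: {spread:.1%}")
print(in_band)
```

A low relative spread across sites is the kind of evidence a lender or investor could use to judge whether a repeated design really delivers predictable costs.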

Leveraging AI and Continuous Commissioning for Quality Control

The use of AI-driven reference libraries ensures that every modular unit meets exact interior specifications and cooling requirements. These intelligent systems analyze vast amounts of performance data to recommend the most efficient layouts for power distribution and airflow. Furthermore, real-time monitoring plays a crucial role in identifying and addressing construction defects during the build process. This “continuous commissioning” means that any issues are caught and corrected before the module leaves the factory, resulting in a nearly flawless installation on-site.
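At its simplest, continuous commissioning means comparing each module's measurements against a reference specification before it ships. The sketch below shows that gate as a plain tolerance check. It does not attempt to model the AI-driven systems described above, and the metric names, targets, and tolerances are hypothetical values invented for the example.

```python
# Hypothetical reference specification: metric -> (target, allowed deviation).
REFERENCE_SPEC = {
    "supply_air_temp_c": (24.0, 2.0),
    "rack_row_spacing_mm": (1200.0, 10.0),
    "busway_voltage_v": (415.0, 8.0),
}


def find_defects(measurements, spec=REFERENCE_SPEC):
    """Return (metric, reason) pairs for anything missing or outside tolerance."""
    defects = []
    for metric, (target, tolerance) in spec.items():
        if metric not in measurements:
            defects.append((metric, "no measurement recorded"))
            continue
        deviation = abs(measurements[metric] - target)
        if deviation > tolerance:
            defects.append((metric, f"off target by {deviation:.1f}"))
    return defects


def ready_to_ship(measurements, spec=REFERENCE_SPEC):
    """A module leaves the factory only when no defects remain."""
    return not find_defects(measurements, spec)


# Hypothetical readings from one module at a factory test station.
module_a = {
    "supply_air_temp_c": 24.8,
    "rack_row_spacing_mm": 1215.0,
    "busway_voltage_v": 413.0,
}
defects = find_defects(module_a)

print(defects)
print("ready to ship:", ready_to_ship(module_a))
```

In this example the rack row spacing is 15 mm off target against a 10 mm tolerance, so the module is held back until the defect is corrected. Running the same check at every assembly stage, rather than once at handover, is what makes the commissioning "continuous".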

Real-World Impact: Precision Engineering in High-Density AI Clusters

In high-density AI clusters, even a minor misalignment in airflow can lead to significant equipment failure. Intelligent modeling recently prevented such a scenario by optimizing the spatial orientation of server racks based on historical thermal performance data. By simulating thousands of cooling scenarios before a single module was built, the engineers ensured that the hardware would operate at peak efficiency under the most intense workloads. This level of precision is far easier to achieve within the controlled framework of modular design than on a conventional construction site.

Evaluating the Industrialized Future of Data Centers

The industry’s collective movement toward predictable industrialization is proving to be the only viable path for sustaining growth during the current surge in artificial intelligence. This transition requires a fundamental shift in how stakeholders view their assets, moving from the creation of bespoke monuments to the production of repeatable industrial products. Developers who embrace this mindset are better equipped to handle the demands of hyperscalers, because their processes are optimized for the rapid, high-quality output that the modern market demands.

Organizations that prioritize sustainability and rapid scalability stand to benefit the most from this systemic change. The modular framework allows for better tracking of environmental metrics and more efficient use of resources, which aligns with global carbon-reduction goals. Ultimately, the successful players in the infrastructure space will be those who recognize that the era of the custom-built data center has passed. By adopting standardized best practices, they can secure a future in which digital infrastructure is as flexible and resilient as the technologies it supports.